This is (was?) a short post to collate some of the work I’ve been doing over the last few months to better understand the environmental footprint of using various generative AI tools, particularly for coding. I’ve already written a little about the tension between AI the tool and AI the project – generative AI is a new piece of technology, which (putting it mildly) has a somewhat chequered origin, and I plan to write more about that soon as well. But for now, the goal of this post is to introduce readers to some of the alternatives to the AI labs’ default products that you can use right now in your day-to-day work, and that at least give you a degree of visibility into their energy consumption and associated carbon emissions.

Before I go any further on this, I need to reiterate: not using AI at all is totally valid as a choice and often the right decision to make when trying to solve a range of technical problems – when people make generative AI models, they are usually optimising the model for versatility ahead of efficiency, and this shows in their energy consumption figures. This post is not saying you should automatically reach for generative AI tools to solve technical problems, and it’s understandable to not want to use generative AI tools in your day job. People should not be forced to use these tools if they do not want to, particularly by bosses who do not understand the technology themselves.

But if you are going to use these tools, then I think it does make sense to understand that there is an environmental footprint associated with using them, just like there is an environmental footprint associated with basically anything else we do. As responsible professionals, we ought to have at least some kind of intuition about the magnitude of the impacts of their use, so we can understand the trade-offs we make in our day-to-day work, and where we choose to focus our efforts when trying to build more sustainable digital services.

Up until recently this was quite hard to actually do, because the largest providers of AI services, the big AI labs, had not been sharing much in the way of information you could really use to change how you use the generative AI services they offer.

Most of the time you would be forced to rely on well-meaning calculations based on educated guesses, or on assumptions that open models worked enough like proprietary models that their resource footprint would be similar. Alternatively, people might try to model impact by extrapolating upwards from extremely selectively chosen disclosures coming from the AI labs creating the models. This piece from Sasha Luccioni and Theo Alves Da Costa on the Hugging Face blog gives a lot of helpful context about why this is problematic.

Anyway, towards the end of last year things started to change, with some AI inference services now available from vendors that do actually disclose energy and carbon emissions information in a way that you can use. You can now use this to inform a) the design of services and b) a decision about whether it makes sense to use generative AI at all to solve a given problem.

I’ll use the rest of this post to run through the simplest options I have found, for common uses of generative AI.

I’m not going to engage too much in the subject of whether these numbers are large or small – that’s the subject of a future post.

TLDR – you can get direct energy readings now, and there are multiple companies offering this kind of inference service.

There are now hosted services you can use that share energy consumption figures, based on readings taken directly from the hardware during AI inference, as part of the response to an API request. In Europe, the company furthest along is GreenPT. In the US, it’s Neuralwatt. You can use them in agentic coding, in data science and in some cases in ChatGPT-style chat tools. It’s not expensive to start, and the open models they make available are often viable alternatives to proprietary frontier models, at better prices.

Ok, with that out the way, now for the more detailed version, with examples.

For using ChatGPT style services

There is only one provider I have found that discloses the energy consumption of using the common “chat” style interfaces made popular by ChatGPT, Google Gemini and “vanilla” Claude from Anthropic, in the actual user interface itself. It is a product called GreenPT, from the company of the same name, based in the Netherlands.

At a very high level, I think what they’re doing is using a lightly customised version of an open source project called Open WebUI as a starting point, which then surfaces energy consumption information from a bare metal machine on European cloud provider Scaleway. They appear to have added various extra instrumentation on the machine executing the AI inference, and they share a little about the approach they take on their documentation pages.

This allows them to show the environmental footprint as you use a chat, based on the measured energy consumption on the hardware, which they then convert into a CO2 figure. They also provide some helpful pointers on using the UI, both to get better results, but also to avoid burning through loads of needless tokens, and by extension energy. You can see a screenshot below:

I haven’t found any other providers that do this yet – while it clearly does require some domain expertise to build a user interface like this, GreenPT is currently a very small company. When you look at the funding available to larger labs, if they are not disclosing similar kinds of information, it’s not because this is beyond their technical capabilities – it’s because they don’t want to.
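Turning a measured energy figure into a CO2 figure, as described above, usually comes down to multiplying the energy by the carbon intensity of the electricity used. Here is a minimal sketch of that arithmetic. It is not GreenPT's actual method, and the function name and the example numbers are illustrative assumptions rather than measured values.

```python
def energy_to_co2_grams(energy_wh: float, grid_intensity_g_per_kwh: float) -> float:
    """Convert energy in watt-hours to grams of CO2-equivalent.

    grid_intensity_g_per_kwh is the carbon intensity of the electricity
    used, in grams of CO2e per kilowatt-hour.
    """
    if energy_wh < 0 or grid_intensity_g_per_kwh < 0:
        raise ValueError("energy and grid intensity must be non-negative")
    # Convert Wh to kWh, then multiply by grams emitted per kWh.
    return (energy_wh / 1000.0) * grid_intensity_g_per_kwh


# ASSUMPTION: made-up numbers for illustration only,
# 2 Wh for a response on a grid emitting 50 g CO2e per kWh.
print(energy_to_co2_grams(2.0, 50.0))
```

The grid intensity you plug in matters a great deal, since it varies by country and by time of day, so a provider's own published figure is preferable to a guess.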

For using the kinds of APIs that you normally use from OpenAI

What about actually writing code with GenAI though? I have found two providers, so far, who provide hosted AI inference services, in a way that is compatible with the OpenAI API – the de facto standard now for lots and lots of usage – who also expose energy consumption figures.

In Europe, once again we have GreenPT, who also provide inference APIs that are largely compatible with code written for the OpenAI API. They have a fast-growing range of APIs, and you can do quite a bit more than just chat now.
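In practice, "compatible with the OpenAI API" tends to mean you send the same style of chat completions request to a different base URL. The sketch below builds such a request using only the standard library, without sending it. The base URL, API key and model name are placeholders I have made up, so take the real values from your provider's documentation.

```python
import json
import urllib.request


def build_chat_request(base_url: str, api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completions request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# ASSUMPTION: placeholder base URL, key and model name, not real provider values.
request = build_chat_request(
    "https://inference.example.com/v1", "YOUR_API_KEY", "example-model", "Hello"
)
print(request.full_url)
# To actually send it: urllib.request.urlopen(request), then json.load() the response.
```

Because only the base URL and key change, most existing OpenAI client code should need little more than a configuration change to point at one of these providers.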

Anyway, every time you make an API request, you get the payload in your response as usual, but you also get information about the energy consumed to serve that request, and the associated carbon emissions.
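To give a feel for what working with such a response might look like, here is a rough sketch that pulls the token counts and energy figures out of a parsed response. The `usage` block follows the usual OpenAI response shape, but the `energy` key and the field names inside it are hypothetical: each provider documents its own names, so check their docs before relying on any of this.

```python
from typing import Any, Optional


def extract_footprint(response: dict[str, Any]) -> dict[str, Optional[float]]:
    """Pull token counts and (hypothetical) energy fields out of a response dict."""
    usage = response.get("usage") or {}
    # ASSUMPTION: the provider nests its figures under an "energy" key with
    # "energy_wh" and "co2_grams" fields. The real field names will differ.
    energy = response.get("energy") or {}
    return {
        "total_tokens": usage.get("total_tokens"),
        "energy_wh": energy.get("energy_wh"),
        "co2_grams": energy.get("co2_grams"),
    }


# A made-up payload in the general shape of an OpenAI-style response.
sample = {
    "choices": [{"message": {"role": "assistant", "content": "Hi!"}}],
    "usage": {"prompt_tokens": 5, "completion_tokens": 3, "total_tokens": 8},
    "energy": {"energy_wh": 0.4, "co2_grams": 0.02},
}
print(extract_footprint(sample))
```

Returning `None` for missing fields, rather than raising, keeps the same code usable against providers that do not report energy at all.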

GreenPT have pretty good docs for using their service, and once I figured out how their API works, I created an unofficial plugin for Simon Willison’s stellar llm Python package that stores the energy consumption in a local SQLite database, to allow for local analysis.
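If you want a sense of what storing energy figures in SQLite for local analysis can look like, here is a small standalone sketch using Python's built-in `sqlite3` module. It is not how the plugin itself works: the table name, columns and helper functions are all invented for illustration.

```python
import sqlite3


def open_log(path: str = ":memory:") -> sqlite3.Connection:
    """Open a database and create the (hypothetical) energy_log table."""
    conn = sqlite3.connect(path)
    conn.execute(
        """
        CREATE TABLE IF NOT EXISTS energy_log (
            id INTEGER PRIMARY KEY,
            logged_at TEXT DEFAULT CURRENT_TIMESTAMP,
            model TEXT NOT NULL,
            total_tokens INTEGER,
            energy_wh REAL
        )
        """
    )
    return conn


def log_request(conn: sqlite3.Connection, model: str, total_tokens: int, energy_wh: float) -> None:
    """Record one request's token count and energy use."""
    with conn:  # commits on success, rolls back on error
        conn.execute(
            "INSERT INTO energy_log (model, total_tokens, energy_wh) VALUES (?, ?, ?)",
            (model, total_tokens, energy_wh),
        )


def energy_by_model(conn: sqlite3.Connection) -> list[tuple[str, float]]:
    """Total energy in Wh per model, sorted by model name."""
    rows = conn.execute(
        "SELECT model, SUM(energy_wh) FROM energy_log GROUP BY model ORDER BY model"
    )
    return rows.fetchall()


# Made-up model names and figures, using an in-memory database.
conn = open_log()
log_request(conn, "model-a", 120, 0.5)
log_request(conn, "model-a", 80, 0.25)
log_request(conn, "model-b", 200, 1.0)
print(energy_by_model(conn))
```

Pass a file path instead of `":memory:"` to keep the log between sessions.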

The plugin I created is available on PyPI, so you can try it out yourself. You can see the code on GitHub, and I have documented how it works as well for people who want to take this idea and run with it in their own projects.

In the US, Neuralwatt have a similar service available. And yes, there’s an llm plugin for that on PyPI too. After publishing these, I learned that Neuralwatt now have an open source repo of their own with a bunch of recipes and examples, and their own LLM plugin. I’ll be honest, it’s a bit more polished than my one was.

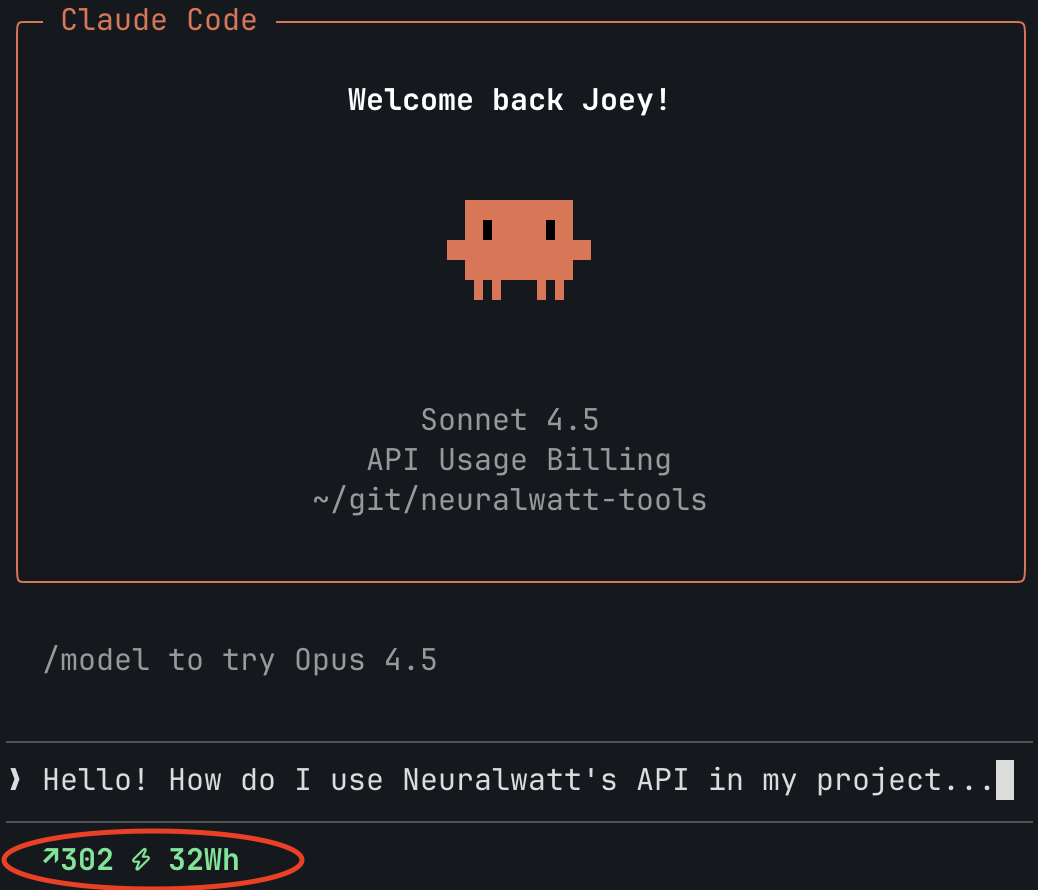

It shows numbers in the text UI when you’re using llm on the command line – something I hadn’t got round to – and after looking through the code, the approach is very similar to the one I took. Here’s a screenshot of what to expect when using it:

The repo for all their code is open source with a permissive Apache 2 license.

Why are these interesting?

These might be helpful if, like me, you have been looking for examples of services that expose the kinds of numbers that the bigger providers so far have been unable, or unwilling to share themselves. For me, one of the things they demonstrate is that disclosing this information is clearly possible. We should expect it.

Both services I’ve mentioned here also provide a dashboard on the back end to show you aggregated figures over time as well, broken down by API key – this allows you to track the impact of specific projects, and if you have sustainability goals, implement team carbon/energy budgets, tracking progress over time.
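If you would rather keep your own tally than rely on a provider dashboard, the per-key budget idea is simple to sketch. The example below sums energy per API key label and flags any key that has gone over its budget. The key labels, figures and budget values are all made up, and the function names are my own.

```python
from collections import defaultdict


def energy_per_key(records: list[tuple[str, float]]) -> dict[str, float]:
    """Sum energy (in Wh) for each API key label."""
    totals: dict[str, float] = defaultdict(float)
    for key_label, energy_wh in records:
        totals[key_label] += energy_wh
    return dict(totals)


def over_budget(totals: dict[str, float], budgets_wh: dict[str, float]) -> list[str]:
    """Return the key labels whose total energy exceeds their budget."""
    return sorted(
        key for key, total in totals.items()
        if key in budgets_wh and total > budgets_wh[key]
    )


# ASSUMPTION: illustrative (key label, energy in Wh) records and budgets.
records = [("docs-bot", 1.5), ("ci-agent", 4.0), ("docs-bot", 0.5), ("ci-agent", 2.0)]
totals = energy_per_key(records)
print(totals)
print(over_budget(totals, {"docs-bot": 5.0, "ci-agent": 5.0}))
```

Using one API key per project or team is what makes this kind of breakdown possible in the first place.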

In this respect, Neuralwatt goes quite a bit further than any other company I’ve seen when it comes to surfacing energy consumption figures – they actually charge for inference by the kilowatt hour. This is radically different to how most providers sell AI inference, because it’s essentially leading with the key thing every large company has been trying its best to avoid sharing – I am a big fan of this approach.
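One way to compare energy-based pricing with the more familiar per-token pricing is to work out the effective price per million tokens for a given workload. Here is a sketch of that arithmetic. The price and usage figures are invented for illustration and are not Neuralwatt's actual rates.

```python
def cost_for_energy(energy_wh: float, price_per_kwh: float) -> float:
    """Cost of a workload billed by energy, in the same currency as the price."""
    return (energy_wh / 1000.0) * price_per_kwh


def effective_price_per_million_tokens(
    energy_wh: float, total_tokens: int, price_per_kwh: float
) -> float:
    """What the energy-based bill works out to per million tokens."""
    if total_tokens <= 0:
        raise ValueError("total_tokens must be positive")
    return cost_for_energy(energy_wh, price_per_kwh) / total_tokens * 1_000_000


# ASSUMPTION: made-up workload of 500 Wh over one million tokens,
# at a made-up price of 2.00 per kWh.
print(effective_price_per_million_tokens(500.0, 1_000_000, 2.0))
```

The useful property of billing this way is that the effective per-token price falls whenever the same work is done with less energy.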

For assisting when you are using notebooks for research

Because these providers are largely extending the existing OpenAI API, this means that you can get a better idea of your day-to-day use if you are using generative AI on other tasks and tools.

I have been a big fan of the Marimo Python code notebook since I discovered it a year and a half or so ago. One of the features they offer is a generative AI code assistant, which lets you describe something that you want in plain English, and then have Marimo suggest code that you can try out in a new cell.

This can be very handy if you don’t work daily with, say, the Pandas dataframe API, or any successors like Polars’ own API and so on.

Now that Marimo has new ‘custom provider’ support for AI inference providers, adding providers like GreenPT or Neuralwatt is pretty straightforward. I’ve created some code samples to show how to add support for them in this GitHub gist, for you to try yourself.

For agentic / vibe coding sessions

If you are a software developer in 2026, then it’s likely you know someone who is using Claude Code, OpenAI Codex, Google Antigravity, or GitHub Copilot to automate the process of writing code.

The easiest way I know to do agentic coding like this and also understand the energy consumption associated with doing so, is to use the open source OpenCode project, again with one of these two providers as custom providers. I do feel a bit silly naming these two providers again and again, but basically, there is no one else I have found out there doing this yet – if you have found any others, please do let me know.

Using ‘real’ coding models

If you watch people using agentic coding tools, you will see them raving about Anthropic’s Claude Opus as a scarily good coding model.

Neuralwatt very recently added support for the very powerful Kimi 2.5 open weights model, which is about as close to Claude Opus as we are likely to get before the next DeepSeek model is released.

I’m not quite sure how, but they also seem to have figured out how to get Claude Code to work with their inference service too:

See more in the “recipes” section of their Neuralwatt tools repo on GitHub.

GreenPT, by comparison, have the latest coding models from European AI lab Mistral, Devstral 2. They have explicit instructions for how to set up OpenCode, and as you can see, it’s not that complicated – it’s about as complicated as adding another custom provider, and is likely something you can try out in less than 15 minutes if you have used OpenCode before.

Update: After some back and forth chatting with the folks at Neuralwatt, we got a fork of OpenCode running while exposing the energy numbers! Here’s a video below:

You can read more here about the implementation notes, and we’re now looking into an extension of the new openresponses spec, to have a standardised way to expose metrics.

If you want to learn more

This isn’t the only way to get meaningful numbers about the direct energy impacts of AI – projects like AI Energy Score are worth your attention too. This post is already way longer than I planned, so I’ll have to cover other projects another time.

As part of my day job at the Green Web Foundation, I host a podcast called Environment Variables (geddit?), produced by the confusingly similarly named Green Software Foundation (a larger industry body focused on… well… Green Software). There, I explore various aspects of sustainability in building digital services, with people working in the field, and researching the topic. I have carried out what I hope are accessible, but still usefully technical interviews with people working for both of these inference providers about how people surface these energy consumption numbers in live services.

The most recent was the one I did with Wilco Burgerraf and Robert Keus at GreenPT earlier this year. I also spoke to Scott Chamberlin at Neuralwatt back in August last year – there we covered a lot of other topics like his time at Microsoft implementing carbon measurement there, internal carbon pricing for digital services, and more.

I’ve done lots of other interviews that may be useful for people curious about the topic in that podcast (note: I am not the only host on that podcast).

If you want to speak to me about any of this stuff, or work together on something, probably the best way to get in touch is via my day job these days at the Green Web Foundation. Here’s the contact page.